Publications

Teaching LLMs to unveil tendentious implicit contents of Italian political communication

A study of instruction-tuned Llama 3.1 and Qwen 2.5 models evaluated on the IMPAQTS-PID benchmark for implicit content interpretation. It shows that these smaller fine-tuned models clearly outperform much larger models used in a zero-shot setting.

Evaluating the abilities of LLMs and SpeechLMs in discovering implicit contents of Italian political speeches

A study evaluating how well LLMs and SpeechLMs identify implicit meanings in Italian political discourse, using the multimodal IMPAQTS-PIDMM benchmark. Results show that text-only models outperform multimodal ones on this task.

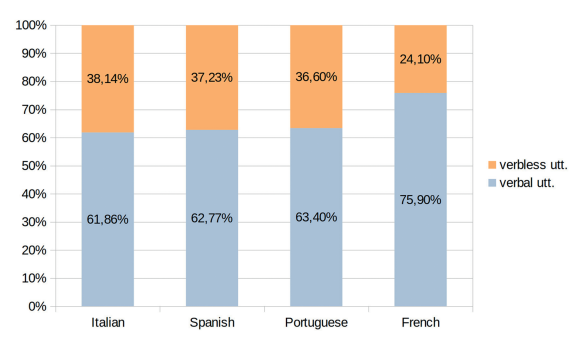

IMPOLS at EVALITA 2026: Overview of the IMPOLS Task

An overview of the IMPOLS shared task at EVALITA 2026, focused on the automatic detection and classification of implicit, potentially manipulative content in Italian political speech.

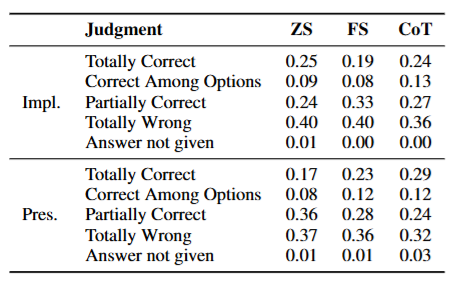

They want to pretend not to understand: The Limits of Current LLMs in Interpreting Implicit Content of Political Discourse

A study on the ability of Large Language Models (LLMs) to interpret implicit content in real-life political discourse. It highlights significant limitations in their pragmatic understanding.

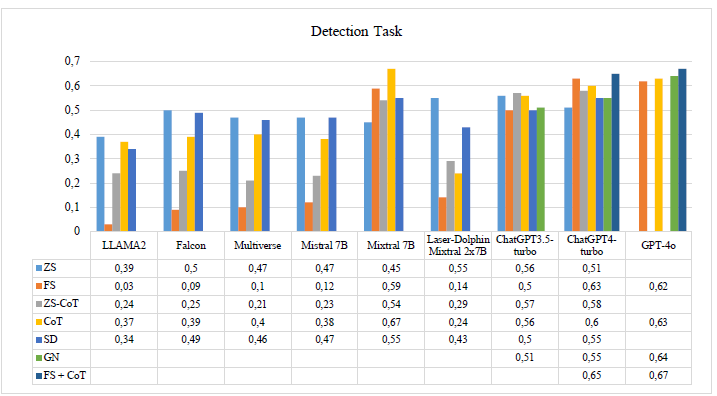

“It’s a further exercise in futility”: implicit content detection and classification in Italian political discourse. A pilot study.

A pilot study of the ability of Large Language Models (LLMs) to process implicit meaning in political discourse. Nine multilingual models are evaluated on binary detection and classification tasks, each tested with seven different prompting techniques.

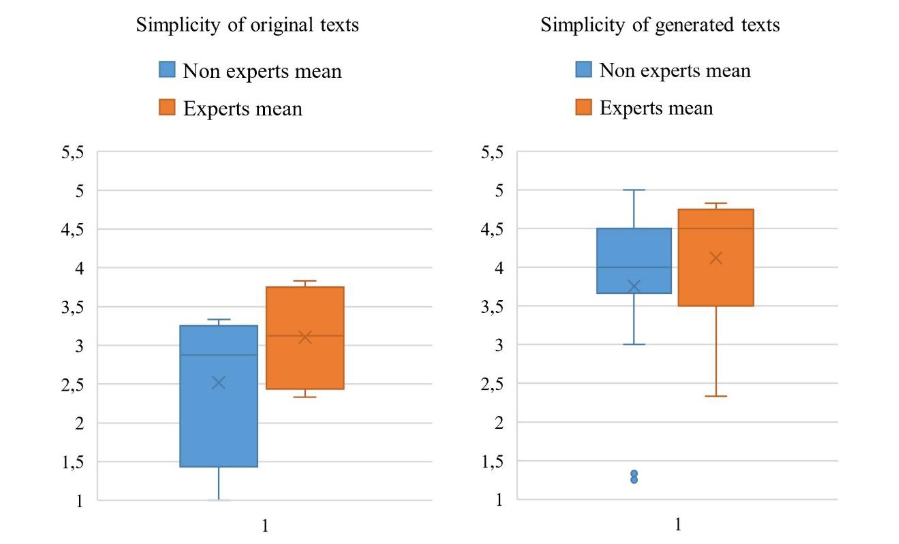

Valutazione di tecniche di prompt engineering per la semplificazione dell'italiano burocratico e professionale

An expert evaluation of ChatGPT's ability to automatically simplify Italian bureaucratic texts.

Exploiting ChatGPT to simplify Italian bureaucratic and professional texts

A study on the use of ChatGPT to simplify complex administrative and legal Italian texts. It evaluates how effectively the model rephrases long sentences and nominal clusters under zero-shot, few-shot, and Chain-of-Thought prompting.